Floating Utopia Act 2

Julia Vergazova, Nikolya Ulyanov

Machine learning, mixed media

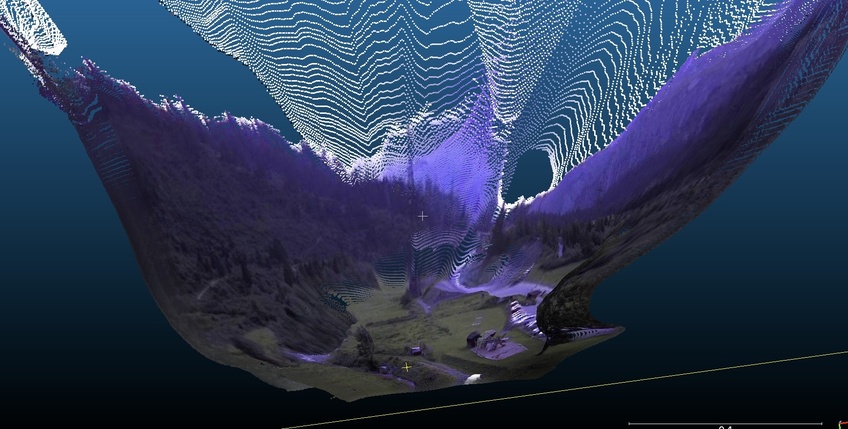

Screenshot from ZECHE virtual environment and exhibition, New Now Festival, Essen, Germany, 2021

Conceptual description

Floating Utopia is a physical and virtual environment and ecosystem that lives and expands with new scenarios. Each scenario is realized in various digital and physical forms: website, VR space, video, objects, events. The geological properties and surfaces of the created environment will be the result of machine terraforming: they will be reconstructed from the visible and invisible representations that attentive machines form of the spaces of reality.

Everything solid and material is rapidly melting into data, increasing the number of options for describing the present and predicting the future. Various datasets are involved in this, e.g. tracking of animal migration, the rate of global sea-level rise, the behavior of social media users, forecasts of epidemic dynamics and of the likelihood of natural disasters. Melting glaciers open up new opportunities for trading algorithms, future hurricanes raise CAT bond rates, and endangered species are turning into speculative financial and political products.

Data collection has become a global and dominant form of resource extraction. The modern perception of information is for the most part a machine perception.

Reality is semi-real. The boundaries of its biological and digital body are as blurry as the surface of a swamp: zones of geological instability, floating boundaries between land and water and, at the same time, natural ecotones, sources of biodiversity and places of stress where ecosystems collide and various forms of species coexistence emerge.

Big data is the digital ecotone in which nature and species are restored: the environment is ending, depleted and burnt out, and it is being pumped into the digital representations that strange devices form of it. In this work, we want to visualize the invisible world that these devices hallucinate (footage from surveillance cameras, data from recognition systems) and stage a digital reenactment of nature in order to restore equal access to it, rather than leaving it the privilege of certain groups.

The resulting reconstructions are hybrid patches inhabited by a variety of myths and strange inhabitants: datasets involved in rehearsing the end of the world and visualizing its restoration. While living organisms on Earth are gradually filling with plastic, a new (bio)diverse utopia is being written by machines.

Artwork technical description, research, point clouds

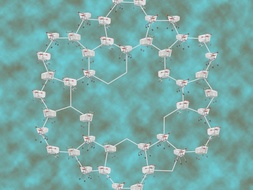

To create three-dimensional reconstructed landscapes that can remain in virtual form or be printed on a 3D printer, we transform flat images of surfaces and landscapes into 3D point clouds using a machine learning method, the 3d-photo-inpainting neural network.

Example 1

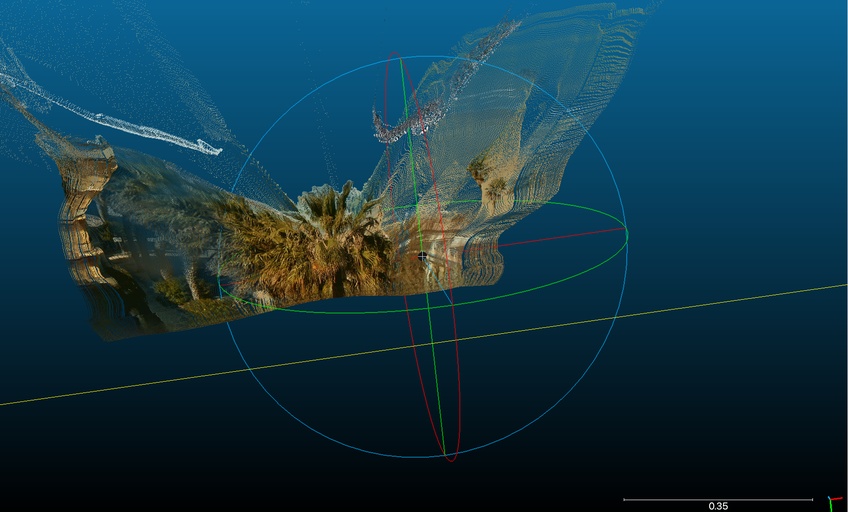

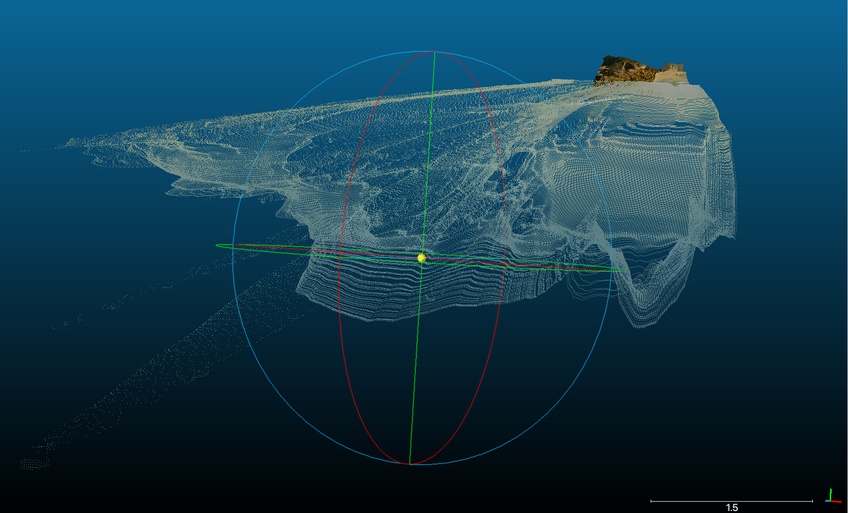

In order to create 3D landscapes, we convert flat images of surfaces and landscapes into a 3D point cloud. A 3D photo is a multi-layer representation of the original flat picture, used to synthesize novel views of the same scene. The network hallucinates colored points and depth in the regions that are occluded in the original view of the photo.
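The core geometric step, lifting a flat image into a colored point cloud, can be sketched as follows. This is only an illustration under stated assumptions, not the 3d-photo-inpainting network itself: it assumes a per-pixel depth map is already available (here a synthetic constant one), a simple pinhole camera with a hypothetical focal length `focal_px`, and the principal point at the image centre. It does not perform the layered inpainting of occluded regions described above.

```python
import numpy as np

def backproject(rgb, depth, focal_px):
    """Lift an H x W image with per-pixel depth into an N x 6 colored point cloud.

    Columns of the result are x, y, z, r, g, b. The pinhole model and the
    centred principal point are assumptions made for this sketch.
    """
    h, w = depth.shape
    # Pixel coordinates measured from the image centre.
    u, v = np.meshgrid(np.arange(w) - (w - 1) / 2.0,
                       np.arange(h) - (h - 1) / 2.0)
    # Pinhole back-projection: X = u * Z / f, Y = v * Z / f, Z = depth.
    x = u * depth / focal_px
    y = v * depth / focal_px
    xyz = np.stack([x, y, depth], axis=-1).reshape(-1, 3)
    colors = rgb.reshape(-1, 3).astype(np.float64)
    return np.hstack([xyz, colors])

# Synthetic stand-in for a camera frame and its estimated depth map.
h, w = 4, 6
rgb = np.zeros((h, w, 3), dtype=np.uint8)
rgb[..., 1] = 200                      # uniform green "landscape"
depth = np.full((h, w), 2.0)           # flat surface two units away

cloud = backproject(rgb, depth, focal_px=3.0)
print(cloud.shape)
```

With a real depth estimate, every pixel becomes one point, so surfaces hidden behind foreground objects stay empty. Filling those gaps is the part that the network hallucinates.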

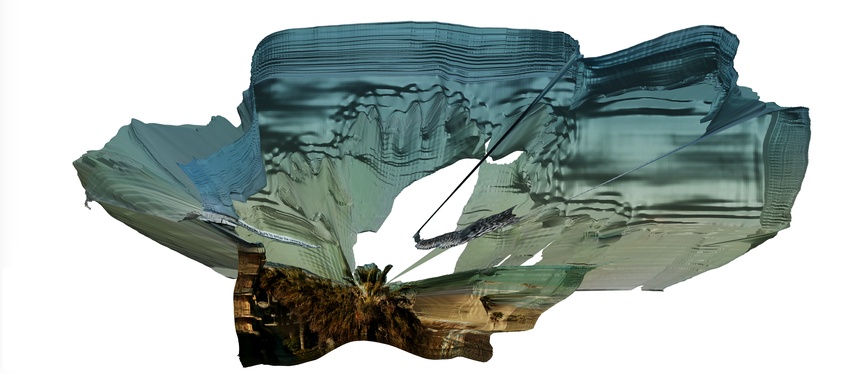

We analyze this point cloud in CloudCompare and process it in MeshLab to create surfaces and printable 3D objects, which represent the neural network's understanding of the nature and environment we are accustomed to.
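To move a point cloud into tools such as CloudCompare or MeshLab, it needs to be stored in a format they can open. The sketch below writes a colored point cloud as an ASCII PLY file, a format both programs read. The three-point cloud, the function name `write_ply`, and the output file name are hypothetical and chosen for illustration only. The export path actually used in this work is not specified here.

```python
import os
import tempfile
import numpy as np

def write_ply(path, cloud):
    """Write an N x 6 array (x, y, z, r, g, b) as an ASCII PLY point cloud."""
    header = "\n".join([
        "ply",
        "format ascii 1.0",
        f"element vertex {len(cloud)}",
        "property float x",
        "property float y",
        "property float z",
        "property uchar red",
        "property uchar green",
        "property uchar blue",
        "end_header",
    ])
    with open(path, "w") as f:
        f.write(header + "\n")
        # One line per point: coordinates as floats, colors as 0-255 integers.
        for x, y, z, r, g, b in cloud:
            f.write(f"{x:.6f} {y:.6f} {z:.6f} {int(r)} {int(g)} {int(b)}\n")

# Hypothetical three-point cloud standing in for a reconstructed landscape.
cloud = np.array([
    [0.0, 0.0, 1.0, 255, 0, 0],
    [1.0, 0.0, 1.0, 0, 255, 0],
    [0.0, 1.0, 2.0, 0, 0, 255],
])

out_path = os.path.join(tempfile.gettempdir(), "landscape_points.ply")
write_ply(out_path, cloud)
```

ASCII PLY is verbose but easy to inspect by hand. For dense clouds a binary variant would be more practical.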

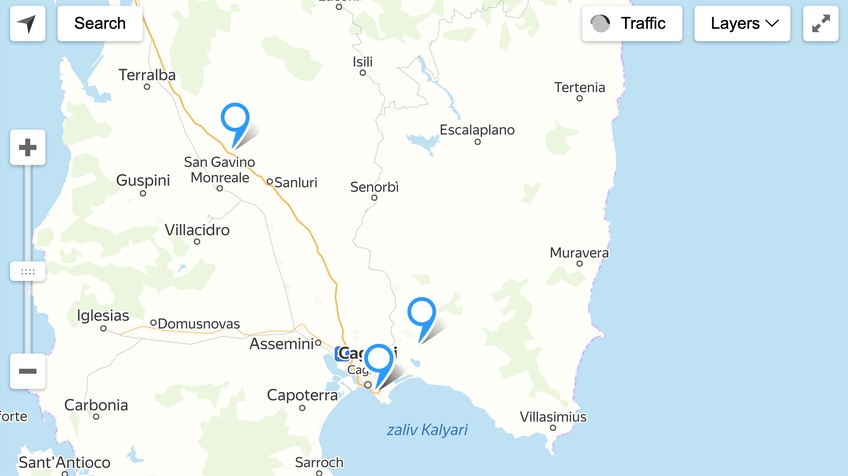

Source image and geographical position of surveillance camera.

Video with reconstructed landscape

Post-production of the point cloud using MeshLab and Meshmixer.

Example 2

Source footage from camera

Post-production of the point cloud using MeshLab and Meshmixer

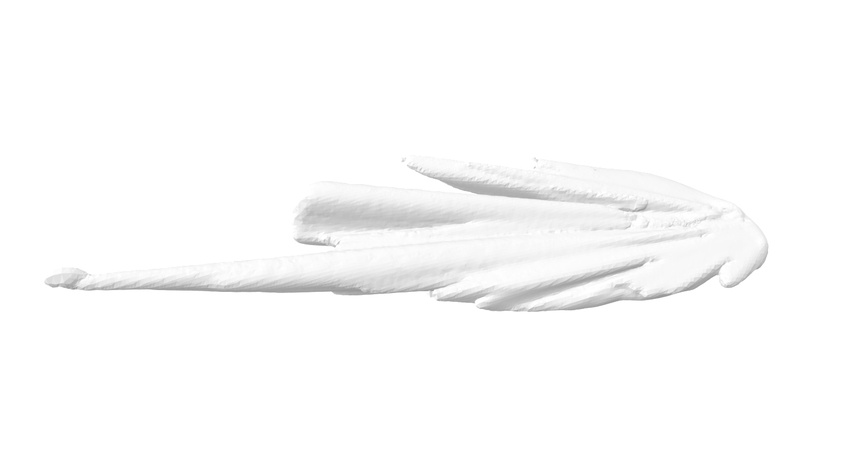

3d Printed Objects

From digital point clouds we can move on to 3D-printed reconstructions of these landscapes.

They pass through the permeable border of the real, return to natural surfaces, and appear in our world in a strange sculptural form.

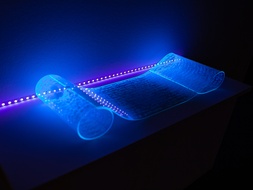

At the same time, we have started experimenting with placing portable, nomadic AR scenes in public spaces. Our first AR attempts are accessible here: